- Home

- Details

- Registry

- RSVP

- Gx works 2 full version

- Back to the future part iii genesis box art

- Adobe photoshop cc 2018 price

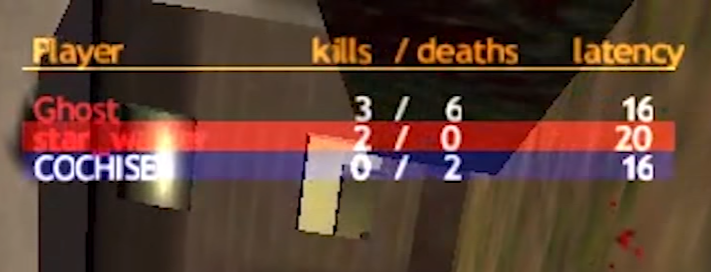

- Cs condition zero mute bots

- Corel videostudio pro x6 review cnet

- Gta 1 cities

- Real gangster crime 2 play online

- Dollar general application

- Sukhmani sahib path download pdf

- Mystery pi new york

- A reasonableness check is a data validation check that

- Vigilante 8- 2nd offense

- Watch dogs 2 release date

- Command and conquer red alert 2 windowed mode

- Save wizard ps4 max edit friends save

- Jojos fashion show review

- Install internet download manager in uc browser

We explored how people establish cooperation with robotic peers by giving participants the chance to choose whether or not to cooperate with a more or less selfish robot that was also more or less interactive, in a more or less critical environment. We measured the participants' tendency to cooperate with the robot, as well as their perception of anthropomorphism, trust and credibility, through questionnaires. We found that cooperation in Human-Robot Interaction (HRI) follows the same rule as in Human-Human Interaction (HHI): participants rewarded cooperation with cooperation and punished selfishness with selfishness. We also discovered two specific robotic profiles capable of increasing cooperation, depending on the payoff. A mute and non-interactive robot was preferred with a high payoff, while participants preferred a more human-behaving robot in conditions of low payoff. Taken together, these results suggest that proper cooperation in HRI is possible but is related to the complexity of the task.

Although cooperation is not unique to our species, human cooperation has a scale and scope far beyond that of other mammals and forms a central underpinning of our psychology, culture, and success as a species. Most of our decisions depend on social interactions and are therefore based on the concomitant choices of others. Consequently, we are constantly checking others' behaviour, interpreting it, and producing or changing our response to it. When proposing a suggestion or a solution to a problem, for example, people look for others' approval, thus indicating that a final decision needs to be reached together. Oskamp proposed that there can be no positive social relationship or outcome from cooperation unless both parties adopt a cooperative attitude. Therefore, to cooperate effectively it is necessary to arrive at an understanding of the other's behaviour such that an equal and fair allocation of effort and resources leads to a joint solution that is beneficial to both parties. Cooperation is conditional upon the expectation of reciprocation. That is, cooperation is enhanced only when there is both the understanding and the willingness to establish a common ground, engendering a high level of trust in the partner.

Of interest to this study is the extension of this cooperative relationship beyond HHI. Over the last decades, robotic agents have become sophisticated to the extent that anthropomorphism could lead us to accept them as our peers. Research in this area has focused upon the design factors, resemblance and socio-cognitive skills that enhance social relations with robots. With the widening usage of robots, their role has moved from that of a complex automated tool to include situations where they can operate as a teammate able to assist humans in the completion of joint tasks. However, in previous studies, the role of the robot was typically subordinate or complementary, limited to assisting or complementing human teammates in their task.

In this study, we examined interactions with a robot peer, a machine entity that had the same role, task, and decision-making ability as its human counterpart. By establishing procedural equality between humans and robots, we aimed to recreate the cooperative relationship typically experienced in human-human studies of cooperation. This has important implications for studies of HRI, as a robotic peer may have different motivations, preferences or intentions from its human partner.
